In a few other entries, I’ve toyed with GPS: getting or parsing the data with Bash, and assessing or using it. When we use GPS, we assume that precision varies by brand and model (some will be more precise than others), but our intuition tells us that two GPS devices of the same brand and model should perform identically. That’s what we’re used to with, or at least expect from, computers. But what about USB GPS devices?

So I got two units of the same brand and model. Let’s call them GPS-1 and GPS-2. Do they perform similarly?

The first thing is to gather data. Just getting a few data points won’t work for what I have in mind. Using a sophisticated `cat` directly from the device port, I grabbed data for a day, once for each device, from the exact same location.
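To give an idea of what turning such a raw capture into positions might look like, here is a minimal Python sketch. It assumes the devices emit NMEA 0183 sentences (typical for USB GPS receivers, but not something stated above) and that the capture was saved to a file; the file name `gps-1.log` and all function names are hypothetical. Only GGA sentences with a valid checksum and a fix are kept.

```python
from functools import reduce


def nmea_checksum_ok(sentence: str) -> bool:
    """Return True if the trailing *hh checksum matches the sentence payload."""
    sentence = sentence.strip()
    if not sentence.startswith("$") or "*" not in sentence:
        return False
    payload, _, given = sentence[1:].partition("*")
    # The NMEA checksum is the XOR of every character between '$' and '*'.
    computed = reduce(lambda acc, ch: acc ^ ord(ch), payload, 0)
    try:
        return computed == int(given[:2], 16)
    except ValueError:
        return False


def dm_to_degrees(value: str, hemisphere: str) -> float:
    """Convert NMEA (d)ddmm.mmmm plus a hemisphere letter to signed degrees."""
    raw = float(value)
    degrees = int(raw // 100)
    minutes = raw - 100 * degrees
    decimal = degrees + minutes / 60.0
    return -decimal if hemisphere in ("S", "W") else decimal


def parse_gga(sentence: str):
    """Return (lat, lon) in decimal degrees for a valid GGA fix, else None."""
    if not nmea_checksum_ok(sentence):
        return None
    fields = sentence.strip()[1:].partition("*")[0].split(",")
    if len(fields) < 7 or not fields[0].endswith("GGA"):
        return None
    # Field 6 is the fix quality; 0 (or empty) means no usable position.
    if fields[6] in ("", "0") or not fields[2] or not fields[4]:
        return None
    return (dm_to_degrees(fields[2], fields[3]),
            dm_to_degrees(fields[4], fields[5]))


def read_positions(path: str):
    """Collect every valid GGA position from a capture file (hypothetical path)."""
    positions = []
    with open(path, "r", errors="replace") as log:
        for line in log:
            fix = parse_gga(line)
            if fix is not None:
                positions.append(fix)
    return positions
```

Something like `read_positions("gps-1.log")` would then return a list of `(lat, lon)` pairs, one per valid fix. Serial captures usually contain a few truncated or corrupted lines, which is why malformed sentences are silently skipped.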

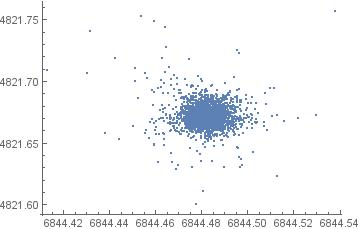

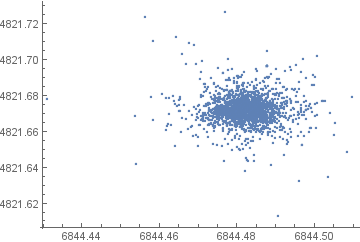

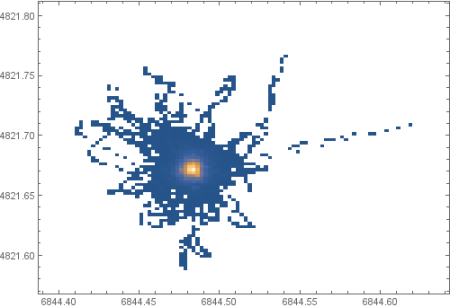

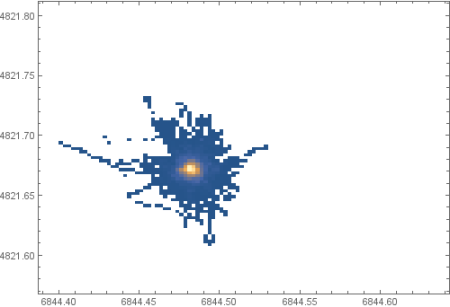

The first series of plots shows the dispersion of the reported positions for GPS-1 and GPS-2:

The following (fat) animated GIFs show how the position varies:

The estimated density functions show that the readings are centered, and not too spread out:

A cursory comparison shows that the two GPS devices do not perform identically. However, is the difference statistically significant? Are the positions normally distributed? If so, what is the standard deviation?
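As a rough first pass at these questions, one could convert each device's readings to offsets in meters around their mean and look at the spread and shape of each axis. The sketch below is not the analysis behind the plots; it assumes a list of `(lat, lon)` pairs in decimal degrees, uses an equirectangular approximation (reasonable for scatter of a few meters), and uses the Jarque-Bera statistic as one possible normality indicator. All names are hypothetical.

```python
import math
import statistics

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius, good enough at this scale


def local_offsets(positions):
    """Convert (lat, lon) degrees to (east, north) meters from the mean position."""
    lat0 = statistics.fmean(lat for lat, _ in positions)
    lon0 = statistics.fmean(lon for _, lon in positions)
    # Longitude degrees shrink with latitude, hence the cosine factor.
    k = math.cos(math.radians(lat0))
    east = [math.radians(lon - lon0) * EARTH_RADIUS_M * k for _, lon in positions]
    north = [math.radians(lat - lat0) * EARTH_RADIUS_M for lat, _ in positions]
    return east, north


def jarque_bera(samples):
    """Return (skewness, excess kurtosis, Jarque-Bera statistic) of a sample."""
    n = len(samples)
    mean = statistics.fmean(samples)
    m2 = sum((x - mean) ** 2 for x in samples) / n
    if m2 == 0:
        raise ValueError("sample has zero variance")
    m3 = sum((x - mean) ** 3 for x in samples) / n
    m4 = sum((x - mean) ** 4 for x in samples) / n
    skew = m3 / m2 ** 1.5
    excess = m4 / m2 ** 2 - 3.0
    jb = n / 6.0 * (skew ** 2 + excess ** 2 / 4.0)
    return skew, excess, jb


def summarize(positions):
    """Per-axis sample standard deviation (meters) and Jarque-Bera statistic."""
    east, north = local_offsets(positions)
    return {
        "n": len(positions),
        "sd_east_m": statistics.stdev(east),
        "sd_north_m": statistics.stdev(north),
        "jb_east": jarque_bera(east)[2],
        "jb_north": jarque_bera(north)[2],
    }
```

For independent samples, a Jarque-Bera value above roughly 5.99 (the 5% critical value of a chi-square distribution with two degrees of freedom) would argue against normality. Consecutive GPS fixes are strongly correlated, though, so with a full day of readings the statistic should be read as a hint rather than a verdict.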

To be continued…